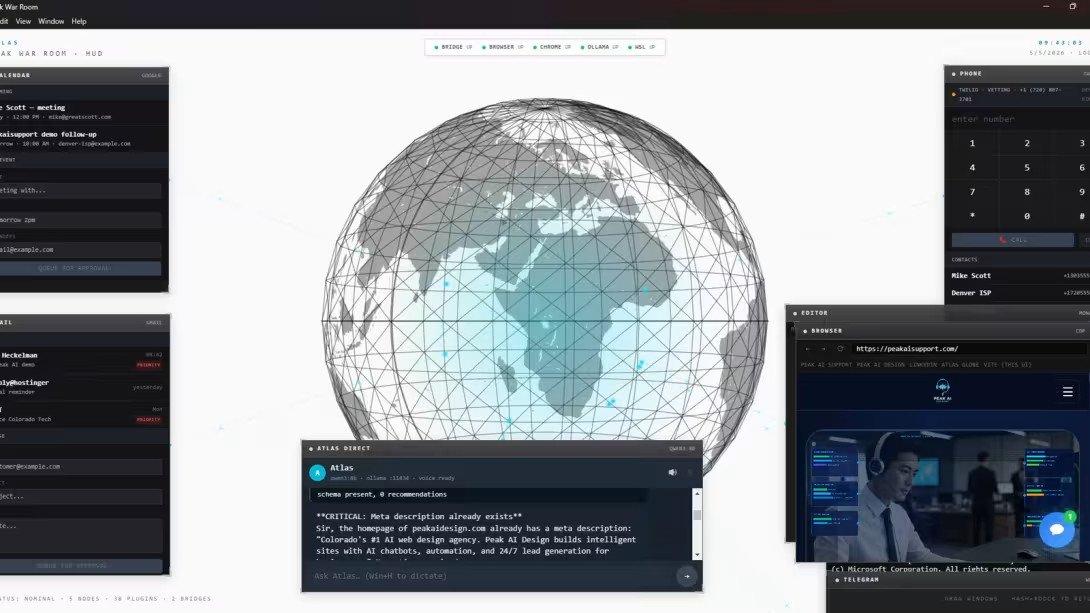

Summary: Atlas is the local-first AI operations stack we built and run: a local LLM as the brain, a plugin-extensible runtime, an Iron Man-style desktop HUD, voice control, and the ability to extend itself. About 95% of the work runs on your own hardware; the remaining ~5% bridges to the cloud for heavy reasoning. The architecture is open at github.com/drelf/peak-ai-ops-architecture.

The problem we solved

Most AI assistants today are cloud-hosted, send your business data to OpenAI or Anthropic, can't be customized below the prompt layer, and stop working when you go offline. For a small business running day-to-day operations (drafting LinkedIn posts, fact-checking customer emails, dispatching support calls, monitoring uptime, generating SEO content), that model is wrong on three fronts: privacy, control, and cost structure.

Local-first AI ops addresses all three at once: the brain runs on your hardware, your data stays local except for the cloud calls you explicitly route out, and every routing decision is your own code.

How Atlas works

Atlas is a Node.js HTTP runtime that exposes 50+ plugins to a local LLM (Ollama running qwen3:8b on a consumer GPU). Every plugin call passes through a tri-state permission gate before it executes:

- Autonomous bucket: read-only ops, drafts, internal compute, and publishing on your own properties. Runs and writes an audit log, with no prompts.

- Requires-approval bucket: outbound messages to anyone other than you (email, SMS), code commits, any spending, and destructive operations on public assets. Blocked with a 403 and routed to Telegram for inline-keyboard approval.

- Forbidden bucket: credentials, banking, government benefits, force-pushes to main, and database drops. Refused, with an alert. Unknown tools are denied by default.

This lets Atlas run autonomously without becoming a security incident: a plugin that has not been classified lands in the forbidden bucket by default.
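The gate logic above can be sketched in a few lines of TypeScript. This is a minimal illustration under assumptions, not Atlas's code: the tool names, the `Map`-based catalog, and the `gate`/`classify` helpers are hypothetical, and the Telegram hand-off and alerting are reduced to log entries. What it does show is the default-deny rule: a tool missing from the catalog falls through to the forbidden branch.

```typescript
// Minimal sketch of a tri-state permission gate with default-deny.
// Tool names, the catalog contents, and helper names are hypothetical.

type Bucket = "autonomous" | "requires-approval" | "forbidden";

interface GateResult {
  status: number; // HTTP-style status the runtime would return to the caller
  action: "run" | "await-approval" | "refuse";
}

// Classification catalog; any tool not listed here is treated as forbidden.
const catalog = new Map<string, Bucket>([
  ["uptime.check", "autonomous"],
  ["linkedin.draft", "autonomous"],
  ["email.send", "requires-approval"],
  ["git.commit", "requires-approval"],
  ["git.force_push_main", "forbidden"],
  ["db.drop", "forbidden"],
]);

const auditLog: string[] = [];
const alerts: string[] = [];

function classify(tool: string): Bucket {
  // Default-deny: unclassified plugins fail closed.
  return catalog.get(tool) ?? "forbidden";
}

function gate(tool: string): GateResult {
  const bucket = classify(tool);
  if (bucket === "autonomous") {
    auditLog.push(`run ${tool}`); // no prompt, but always leave an audit trail
    return { status: 200, action: "run" };
  }
  if (bucket === "requires-approval") {
    auditLog.push(`hold ${tool}`); // 403 until a human approves out of band
    return { status: 403, action: "await-approval" };
  }
  auditLog.push(`refuse ${tool}`);
  alerts.push(`forbidden tool requested: ${tool}`); // refuse and alert the owner
  return { status: 403, action: "refuse" };
}
```

Keeping the classification as data rather than scattering checks through each plugin means that classifying a new plugin is a one-line change, and forgetting to do so fails closed.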

Self-extension

Atlas has two meta-plugins that let it grow new capabilities on demand:

- plugin-builder: given a description of a missing capability, dispatches the job to a real coding agent (Claude Code, via the open-source claude-bridge-mcp). The agent has filesystem access, so it reads the template plugins, writes the new plugin file, and updates the routing catalog, then returns a build report.

- agent-builder: the same shape, but it spawns a sub-agent with its own LLM loop, tool subset, and memory namespace. This is how Atlas grows specialists for specific domains.
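The scoping that agent-builder gives each sub-agent (its own tool subset and memory namespace) can be sketched as follows. The `SubAgent` class, its methods, and the `name:key` prefix scheme are assumptions for illustration; the sub-agent's LLM loop is omitted, and the shared `Map` stands in for whatever memory store Atlas actually uses.

```typescript
// Minimal sketch of a scoped sub-agent: its own tool subset and memory namespace.
// SubAgent, its methods, and the "name:key" prefix scheme are hypothetical.

type ToolFn = (input: string) => string;

class SubAgent {
  readonly name: string;
  private readonly tools = new Map<string, ToolFn>();
  private readonly memory: Map<string, string>; // shared store, keys namespaced per agent

  constructor(name: string, allTools: Map<string, ToolFn>, allowed: string[], memory: Map<string, string>) {
    this.name = name;
    this.memory = memory;
    // Copy in only the tools this agent is allowed to call.
    for (const toolName of allowed) {
      const fn = allTools.get(toolName);
      if (fn) this.tools.set(toolName, fn);
    }
  }

  callTool(toolName: string, input: string): string {
    const fn = this.tools.get(toolName);
    if (!fn) throw new Error(`${this.name} has no access to ${toolName}`);
    return fn(input);
  }

  // Prefixing keys with the agent name keeps each agent's memory separate.
  remember(key: string, value: string): void {
    this.memory.set(`${this.name}:${key}`, value);
  }

  recall(key: string): string | undefined {
    return this.memory.get(`${this.name}:${key}`);
  }
}
```

Scoping by construction (the agent never holds a reference to tools outside its subset) is simpler to audit than checking permissions on every call.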

Hallucination-proof via Context7

Local LLMs (and even frontier ones) hallucinate API signatures because their training data is stale. The fix is to connect Context7, a hosted MCP server with current documentation for 9,000+ libraries. When Atlas dispatches a coding task, Context7 supplies the current docs it needs, such as the React API, Stripe's webhook signature scheme, or the JSON shape of a Telegram inline keyboard. It is free and requires no key. This targets the most common failure mode of LLM-generated integration code: calls written against an API that no longer looks the way the model remembers it.
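For a concrete example of the kind of detail Context7 supplies, here is the Telegram Bot API's inline keyboard shape, applied to an approve/deny prompt like the one the requires-approval bucket routes to Telegram. The `sendMessage` fields (`chat_id`, `text`, `reply_markup`, `inline_keyboard`, `callback_data`) and the 64-byte `callback_data` limit come from the Bot API; the `approve:<id>` / `deny:<id>` convention and the `approvalPayload` helper are hypothetical.

```typescript
// Minimal sketch: a Telegram sendMessage payload with an approve/deny inline keyboard.
// Field names follow the Telegram Bot API; the "approve:<id>" convention is hypothetical.

interface InlineKeyboardButton {
  text: string;
  callback_data: string; // Telegram limits this to 1-64 bytes
}

interface SendMessagePayload {
  chat_id: number | string;
  text: string;
  reply_markup: { inline_keyboard: InlineKeyboardButton[][] }; // rows of buttons
}

function approvalPayload(chatId: number, actionId: string, summary: string): SendMessagePayload {
  const approve = `approve:${actionId}`;
  const deny = `deny:${actionId}`;
  // The limit is in UTF-8 bytes, not characters, so measure encoded length.
  for (const data of [approve, deny]) {
    if (new TextEncoder().encode(data).length > 64) {
      throw new Error(`callback_data too long: ${data}`);
    }
  }
  return {
    chat_id: chatId,
    text: `Approval needed: ${summary}`,
    reply_markup: {
      // One row with two buttons side by side.
      inline_keyboard: [[
        { text: "Approve", callback_data: approve },
        { text: "Deny", callback_data: deny },
      ]],
    },
  };
}
```

The payload is sent as JSON to `https://api.telegram.org/bot<token>/sendMessage`; when a button is pressed, the bot receives a `callback_query` update carrying that button's `callback_data`, which the runtime can match back to the pending action.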

The HUD — Peak War Room

The interface is a desktop app (Electron) with floating, draggable windows arranged around a 3D globe: a chat pane, a Monaco code editor, a real terminal (PTY), a browser pane, a Telegram embed, a calendar, an email composer, and a softphone dialer, plus a status strip showing daemon health. The aesthetic is intentional: operating Atlas should feel like running a small command center, not chatting with a bot.

Voice loop

Speech in: native OS dictation (on Windows, the modern Win+H voice typing engine). Speech out: Microsoft Edge neural TTS, which is free, hosted, needs no key, and offers ~50 voices. The default voice, Ryan Neural (British male), gives Atlas its Jarvis tone. Whisper or OpenAI speech models are available as an optional upgrade.
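Neural voices such as Ryan Neural are addressed by name in SSML (voice id `en-GB-RyanNeural`). Below is a minimal sketch of building that SSML document, assuming the TTS service accepts standard SSML; the post doesn't describe how Atlas transports it to the Edge service, so that part is not shown, and `toSsml`/`escapeXml` are hypothetical helpers. Escaping matters because dictated or generated text can contain `&` or `<`, which would otherwise break the markup.

```typescript
// Minimal sketch: wrap text in SSML for a named neural voice.
// Assumes the TTS endpoint accepts standard SSML; sending it is out of scope here.

function escapeXml(s: string): string {
  return s
    .replace(/&/g, "&amp;") // escape & first so the entities below aren't double-escaped
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&apos;");
}

function toSsml(text: string, voice: string = "en-GB-RyanNeural"): string {
  // Derive xml:lang from the voice id, e.g. "en-GB-RyanNeural" -> "en-GB".
  const lang = voice.split("-").slice(0, 2).join("-");
  return (
    `<speak version="1.0" xmlns="http://www.w3.org/2001/10/synthesis" xml:lang="${escapeXml(lang)}">` +
    `<voice name="${escapeXml(voice)}">${escapeXml(text)}</voice>` +
    `</speak>`
  );
}
```

Swapping voices is then just a different `voice` argument, for example `toSsml("Good morning", "en-US-GuyNeural")`.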

Open-source companions

Two MCP bridges were released as open source alongside Atlas:

- atlas-bridge-mcp: bridges any MCP-compatible AI agent to a browser-agent / Puppeteer HTTP backend

- claude-bridge-mcp: bridges MCP agents to a Claude Code CLI subprocess for autonomous coding-task dispatch

Both are MIT-licensed and pip/npx-installable. Together they make agent-to-backend dispatch a one-config-line operation.

What ships next

- Telegram inline-keyboard approval flow for requires-approval actions

- Self-heal cron — when scheduled jobs fail, Atlas auto-spawns a coding-agent dispatch to diagnose + propose a fix → human approves via Telegram → fix applies

- n8n agent layer — sub-agents migrate from in-process plugins to n8n workflows for visual debugging, cron triggers, and isolated state

- Multi-tenant config — one Atlas instance can run ops for multiple businesses

- Wake word — opt-in "Hey Atlas" via on-device wake-word library
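The self-heal cron item above is essentially a small state machine: a failed job is dispatched to a coding agent, the agent proposes a fix, a human approves or denies it via Telegram, and only an approved fix is applied. The sketch below encodes those transitions under stated assumptions: the state and event names are hypothetical, and the agent dispatch and Telegram prompt are represented only as events.

```typescript
// Minimal sketch of the self-heal flow as a state machine. Names are hypothetical.

type HealState = "failed" | "diagnosing" | "awaiting-approval" | "applied" | "rejected";
type HealEvent = "dispatch" | "fix-proposed" | "approve" | "deny";

// Allowed transitions; any (state, event) pair not listed is illegal.
const transitions: Record<HealState, Partial<Record<HealEvent, HealState>>> = {
  failed: { dispatch: "diagnosing" },                            // job failed: hand it to the coding agent
  diagnosing: { "fix-proposed": "awaiting-approval" },           // agent proposes a fix
  "awaiting-approval": { approve: "applied", deny: "rejected" }, // human decides via Telegram
  applied: {},                                                   // terminal
  rejected: {},                                                  // terminal
};

function step(state: HealState, event: HealEvent): HealState {
  const next = transitions[state][event];
  if (next === undefined) {
    throw new Error(`illegal transition: ${event} in state ${state}`);
  }
  return next;
}
```

Making illegal transitions throw (for example, `approve` while still diagnosing) keeps a fix from being applied without passing through the human approval step.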

Architecture overview is open

The full architecture is published at github.com/drelf/peak-ai-ops-architecture. It covers patterns and philosophy, not operational specifics. The companion repos, atlas-bridge-mcp and claude-bridge-mcp, are MIT-licensed.
